Paper PDF: Li et al. - 2025 - Hello Again! LLM-powered Personalized Agent for Long-term Dialogue.pdf (NAACL 2025)

Paper Bib: Li, Hao, et al. Hello Again! LLM-Powered Personalized Agent for Long-Term Dialogue. arXiv:2406.05925, arXiv, 13 Feb. 2025. arXiv.org, https://doi.org/10.48550/arXiv.2406.05925.

Problem Statement

Most existing open-domain dialogue systems focus on brief single-session interactions, neglecting the real-world demand for long-term companionship and personalized interactions with chatbots Page 1: Abstract. Meeting this demand hinges on event summary and persona management, which enable reasoning for appropriate long-term dialogue responses Page 1: Abstract. The paper aims to fill this gap by proposing a model-agnostic framework capable of maintaining long-term event memory and preserving persona consistency for extended, personalized interactions Page 1: Introduction.

Key Contributions

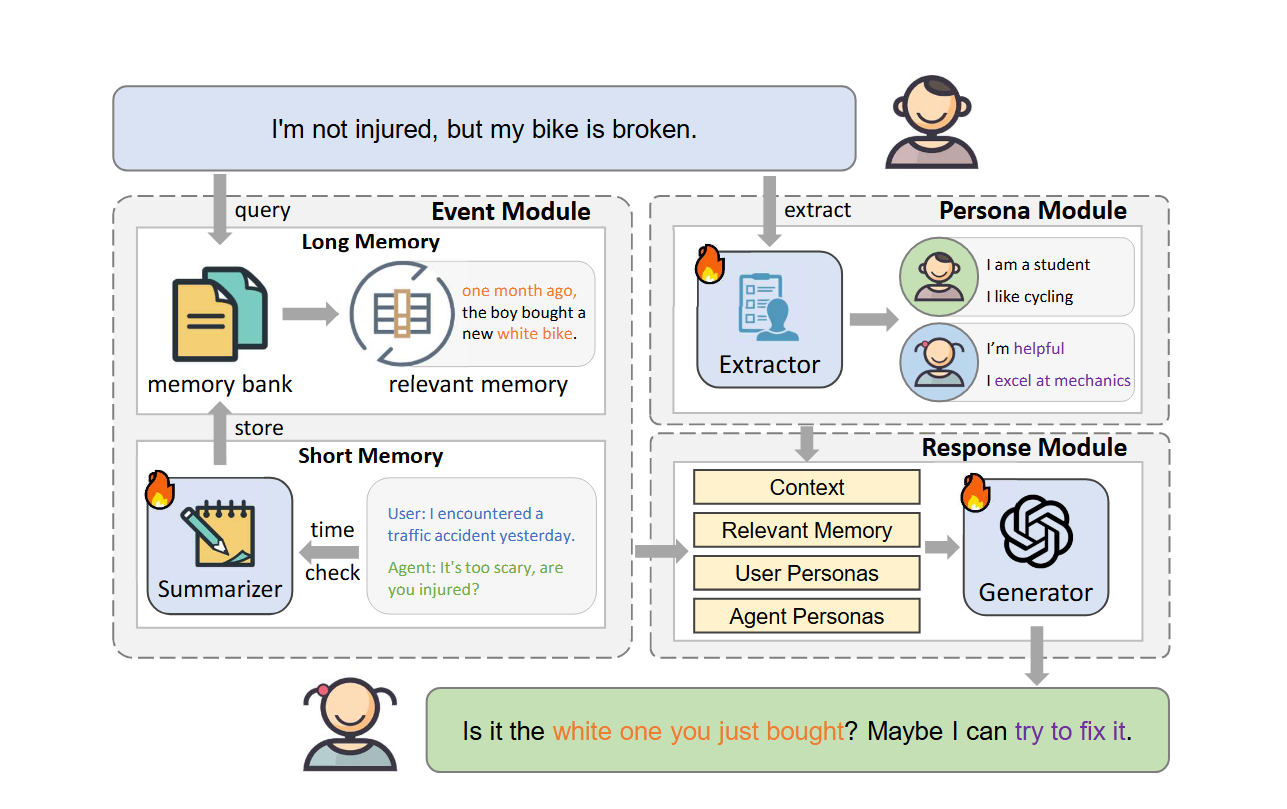

- LD-Agent Framework: The paper introduces LD-Agent, a model-agnostic Long-term Dialogue Agent framework, which comprises three independently tunable modules dedicated to event perception, persona extraction, and response generation Page 1: Abstract.

- Disentangled and Tunable Modules: It presents a disentangled approach where each module can be accurately tuned, allowing the framework to adapt to various dialogue tasks through module re-training Page 2: Contributions Summary.

- Enhanced Long-Term Memory Mechanisms: For the event memory module, the framework utilizes separate long-term and short-term memory banks to focus on historical and ongoing sessions, respectively Page 1: Abstract. A topic-based retrieval mechanism is introduced for the long-term memory bank, which stores vector representations of high-level event summaries from previous sessions, to enhance retrieval accuracy Page 1: Abstract, Page 2: Event Memory Perception Module.

- Dynamic Persona Management: The persona module performs dynamic persona modeling for both users and agents, with extracted personas being continuously updated and stored in a long-term persona bank Page 1: Abstract, Page 2: Persona Extraction Module.

- Empirical Validation: The effectiveness, generality (across LLMs and non-LLMs), and cross-domain capabilities of LD-Agent are empirically demonstrated on illustrative benchmarks such as MSC and Conversation Chronicles (CC) Page 1: Abstract, Page 2: Experimental Setup.

Methods and Experiments

The LD-Agent framework is built upon three primary modules:

- Event Memory Perception Module: This module is designed to perceive historical events for generating coherent responses across sessions Page 4: Event Perception.

  - Long-term Memory: This component extracts events (occurrence times and brief summaries) from past sessions and encodes them into vector representations stored in a long-term memory bank Page 4: Memory Storage. The event summarizer is improved using instruction tuning on a rebuilt DialogSum dataset Page 4: Event Summary. Memory retrieval from this bank considers semantic relevance, topic overlap (calculated using nouns from conversations and summaries), and a time decay coefficient Page 4: Memory Retrieval. A semantic threshold is applied to filter retrievals Page 4: Memory Retrieval.

  - Short-term Memory: This component actively manages a dynamic dialogue cache with timestamps for the current session Page 4: Short-term Memory. If the time interval since the last recorded utterance exceeds a threshold, the cached dialogue is summarized by the long-term memory module and stored in the long-term bank, and the short-term cache is cleared Page 4: Short-term Memory Interaction with LTM.

- Dynamic Personas Extraction Module: This module maintains long-term persona consistency using a bidirectional user-agent modeling approach with a tunable persona extractor Page 5: Dynamic Personas Extraction. Personas are extracted from utterances by an extractor enhanced with LoRA-based instruction tuning on a dataset built from MSC, and are stored in separate user and agent persona banks Page 5: Persona Extractor Tuning. A tuning-free, LLM-based extraction using Chain-of-Thought is offered as an alternative Page 5: Tuning-free Persona Extraction.

- Response Generation Module: This module takes the new user utterance, the retrieved relevant memories (m), the short-term context, and the user and agent personas as input to a response generator (G), which produces an appropriate response (r) Page 5: Response Generation Formula. The generator can be tuned on a dataset constructed using MSC and CC, incorporating outputs from the event and persona modules Page 5: Generator Tuning Dataset.
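The long-term retrieval step described above combines three signals with a semantic cutoff. The following is a minimal sketch of one plausible way to combine them, not the paper's exact formula: the bag-of-words cosine stands in for a real sentence encoder, topic nouns are supplied by hand rather than by a POS tagger, and the names `EventMemory`, `retrieve`, `gamma`, and `tau` along with their default values are assumptions made for illustration.

```python
import math
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass
class EventMemory:
    summary: str             # brief event summary from a past session
    topics: FrozenSet[str]   # topic nouns (hand-supplied here; an assumption)
    timestamp: float         # occurrence time, in seconds


def bag_of_words(text: str) -> Dict[str, int]:
    """Toy text encoder: lowercase token counts (stand-in for an embedding model)."""
    counts: Dict[str, int] = {}
    for token in text.lower().split():
        counts[token] = counts.get(token, 0) + 1
    return counts


def cosine(a: Dict[str, int], b: Dict[str, int]) -> float:
    dot = sum(v * b.get(k, 0) for k, v in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def topic_overlap(query_topics: FrozenSet[str], memory_topics: FrozenSet[str]) -> float:
    """Symmetric overlap: mean of the shared fraction on each side."""
    if not query_topics or not memory_topics:
        return 0.0
    shared = len(query_topics & memory_topics)
    return 0.5 * (shared / len(query_topics) + shared / len(memory_topics))


def retrieve(query: str, query_topics: FrozenSet[str], now: float,
             bank: List[EventMemory], gamma: float = 0.2,
             tau: float = 1e7) -> Optional[EventMemory]:
    """Return the best-scoring memory, or None if nothing passes the semantic threshold."""
    query_vec = bag_of_words(query)
    best, best_score = None, float("-inf")
    for memory in bank:
        semantic = cosine(query_vec, bag_of_words(memory.summary))
        if semantic < gamma:          # semantic threshold filters weak matches
            continue
        decay = math.exp(-(now - memory.timestamp) / tau)  # older memories score lower
        score = decay * (semantic + topic_overlap(query_topics, memory.topics))
        if score > best_score:
            best, best_score = memory, score
    return best


bank = [
    EventMemory("user adopted a puppy named max", frozenset({"puppy", "max"}), 0.0),
    EventMemory("user started a new job at a bakery", frozenset({"job", "bakery"}), 86_400.0),
]
hit = retrieve("how is your puppy max doing", frozenset({"puppy", "max"}), now=172_800.0, bank=bank)
miss = retrieve("what is the weather today", frozenset({"weather"}), now=172_800.0, bank=bank)
```

Here `hit` is the puppy memory, while `miss` is `None` because no stored summary clears the semantic threshold; returning nothing in that case keeps irrelevant history out of the generator's input.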

Experiments were conducted on the multi-session datasets MSC and CC Page 6: Datasets. Baselines included ChatGPT, ChatGLM, BlenderBot, BART, and HAHT Page 6: Baselines. Automatic evaluation metrics were BLEU-N, ROUGE-L, METEOR, and ACC (for the persona extractor) Page 6: Metrics. Human evaluations assessed coherence, fluency, and engagingness Page 6: Human Evaluation Metrics.

Critical Analysis

The authors explicitly state the following limitations Page 9: Limitations:

- Lacking Real-World Datasets: Current long dialogue datasets are typically synthetic (manually created or LLM-generated), which introduces a gap from real-world data Page 9: Lacking Real-World Datasets. The authors aim to validate their approach on real long-term dialogue data in the future Page 9: Lacking Real-World Datasets.

- Sophisticated Module Design: While LD-Agent provides a general framework, the module implementations employ relatively basic methods, suggesting that more sophisticated designs could be explored in the future Page 9: Sophisticated Module Design. Specific areas for future work include advanced long-term memory summarization, more accurate memory retrieval, improved personality extraction, and persona-based retrieval techniques Page 9: Sophisticated Module Design.

No other significant methodological flaws or major weaknesses are immediately apparent from the paper’s description; the framework is presented as modular and is evaluated across several dimensions.

Relatives and Insights

The LD-Agent’s approach to Long-Term Memory (LTM) provides several valuable insights and potential parallels for your dynamic, hierarchical Knowledge Graph (KG) based LTM project:

- Dual Memory System (Long-Term and Short-Term Banks):

  - Relevance: LD-Agent’s separation of LTM (for historical session summaries) and STM (for current session context) is a common and practical design pattern Page 1: Abstract, Page 4: Event Perception. This separation can be directly mapped to your KG project, where the main KG acts as the persistent LTM, and a temporary cache or a dedicated, rapidly updated section of the KG could handle STM.

  - Application to KG: LD-Agent summarizes the short-term cache and moves its contents to the long-term bank once the gap since the last utterance exceeds a time threshold Page 4: Short-term Memory Interaction with LTM. This offers a concrete mechanism for your “consolidation” operation: information from STM could be abstracted or transformed into structured facts before being integrated into the stable, hierarchical layers of your KG.

- Event Summarization and Structured Representation in LTM:

  - Relevance: LD-Agent’s LTM stores “vector representations of high-level event summaries” Page 2: Event Memory Perception Module. This emphasizes the need for abstracting raw interaction data for efficient long-term storage and retrieval.

  - Application to KG: Your KG can inherently store structured event representations. Instead of just event summary vectors, you can define event schemas within your KG (e.g., event type, participants, time, location, associated facts). The tunable event summary module in LD-Agent, fine-tuned on DialogSum Page 4: Event Summary, suggests that you could develop or fine-tune LLMs to extract these structured event details specifically for populating your KG from dialogues.

- Enhanced LTM Retrieval Mechanisms:

  - Relevance: LD-Agent’s LTM retrieval considers semantic relevance, topic overlap (using extracted nouns), and time decay Page 4: Memory Retrieval. This multi-faceted approach aims for more accurate retrieval.

  - Application to KG: Your KG architecture allows for even more sophisticated retrieval strategies:

    - The hierarchical nature of your KG can guide retrieval, allowing searches within specific contexts or levels of abstraction.

    - Explicitly modeled temporal relationships and timestamps in the KG can support precise time-aware queries beyond simple decay.

    - Graph pattern matching can identify complex event constellations or user behavior patterns.

    - The “topic overlap” concept can be implemented by tagging KG nodes/subgraphs with topics or leveraging the KG’s ontology for thematic searching.

- Dynamic Persona Management as Long-Term Memory:

  - Relevance: LD-Agent uses a “long-term persona bank” to store dynamically updated user and agent personas, crucial for personalized interactions Page 2: Persona Extraction Module.

  - Application to KG: Your KG is ideally suited for representing rich, evolving user models. User attributes, preferences, inferred states, interaction history summaries, and even relationships with other entities can be nodes and edges within the KG. The methods for persona extraction (tunable extractor or LLM with CoT) Page 5: Dynamic Personas Extraction can serve as inspiration for how to populate and update these user profiles within your KG.

- Modularity and Tunability for LTM Operations:

  - Relevance: LD-Agent’s modules (event perception, persona extraction) are independently tunable Page 1: Abstract.

  - Application to KG: This reinforces the idea of having specialized components for interacting with your LTM KG. For example, you could have:

    - An “Information Extraction/Consolidation LLM” fine-tuned to convert dialogue into KG-compatible facts/events.

    - A “KG Query Generation LLM” (part of your router or reasoning LLM) specialized in translating natural language or internal states into KG queries.

    - A “KG Reasoning Augmentation LLM” that processes KG query results for the final reasoning LLM.
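The consolidation mechanism discussed under the dual memory system can be sketched as a short-term cache that flushes into a long-term store whenever the gap between utterances exceeds a threshold. This is an illustrative sketch only: the `DualMemory` class, the `gap_threshold` value, the choice of the first utterance's time as the event time, and the string-joining summarizer (a stand-in for a tuned summarization model or a KG fact extractor) are all assumptions, not details taken from the paper.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Utterance = Tuple[float, str, str]  # (timestamp in seconds, speaker, text)


@dataclass
class DualMemory:
    summarize: Callable[[List[Utterance]], str]  # hypothetical summarizer hook
    gap_threshold: float = 600.0                 # assumed session gap, in seconds
    short_term: List[Utterance] = field(default_factory=list)
    long_term: List[Tuple[float, str]] = field(default_factory=list)

    def add(self, timestamp: float, speaker: str, text: str) -> None:
        """Cache an utterance; consolidate first if a new session has started."""
        if self.short_term and timestamp - self.short_term[-1][0] > self.gap_threshold:
            self.consolidate()
        self.short_term.append((timestamp, speaker, text))

    def consolidate(self) -> None:
        """Summarize the cached session into the long-term store, then clear the cache."""
        if not self.short_term:
            return
        event_time = self.short_term[0][0]  # assumption: event time = first utterance
        self.long_term.append((event_time, self.summarize(self.short_term)))
        self.short_term.clear()


def join_texts(utterances: List[Utterance]) -> str:
    """Placeholder summarizer: concatenates utterance texts."""
    return " | ".join(text for _, _, text in utterances)


memory = DualMemory(summarize=join_texts)
memory.add(0.0, "user", "I adopted a puppy")
memory.add(30.0, "agent", "Congratulations!")
memory.add(5000.0, "user", "Hi again")  # gap > 600 s triggers consolidation
```

After the third call, the first session sits in `memory.long_term` as a single summarized event and only the new utterance remains in `memory.short_term`. In a KG setting, `summarize` could instead return structured facts to be merged into the graph.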

Key Insights for Your KG-based LTM:

- Leverage Structure for Richer LTM: LD-Agent relies on summarized event vectors for its LTM. Your KG can provide a much richer, explicitly structured LTM, enabling more complex queries, better interpretability, and easier updates of specific facts.

- Explicit Temporal and Causal Reasoning: While LD-Agent incorporates time decay Page 4: Memory Retrieval, a KG can explicitly model temporal sequences and causal links between events, which is crucial for deeper long-term reasoning and user behavior understanding.

- Knowledge Update Granularity: Updating summaries in LD-Agent might be different from updating specific facts or relationships in a KG. Your KG design should consider granular updates, conflict resolution, and maintaining consistency as new information is added over long periods. This is a core aspect of a “dynamic” KG.

- Hierarchical Abstraction: LD-Agent’s event summaries are a form of abstraction. Your hierarchical KG can explicitly support multiple levels of abstraction, allowing the system to reason at different granularities (e.g., specific interactions vs. long-term trends), which is a unique advantage to explore.
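The points above about explicit temporal modeling and granular updates can be made concrete with a small in-memory fact store. This is a hypothetical sketch, not part of LD-Agent: `Fact`, `TemporalKG`, and their methods are invented names, and the store assumes every predicate is single-valued, so a new value supersedes the old one by closing its validity interval rather than overwriting it.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Fact:
    subject: str
    predicate: str
    obj: str
    valid_from: float
    valid_to: Optional[float] = None  # None means the fact is still believed true


class TemporalKG:
    """Toy triple store with validity intervals (assumes single-valued predicates)."""

    def __init__(self) -> None:
        self.facts: List[Fact] = []

    def assert_fact(self, subject: str, predicate: str, obj: str, at: float) -> None:
        """Add a fact; if it conflicts with a current one, close the old interval."""
        for fact in self.facts:
            if (fact.subject, fact.predicate) == (subject, predicate) and fact.valid_to is None:
                if fact.obj == obj:
                    return            # already known, nothing to update
                fact.valid_to = at    # supersede instead of deleting history
        self.facts.append(Fact(subject, predicate, obj, at))

    def query(self, subject: str, predicate: str, at: float) -> Optional[str]:
        """Return the value that held at time `at`, or None if unknown."""
        for fact in self.facts:
            if (fact.subject, fact.predicate) != (subject, predicate):
                continue
            if fact.valid_from <= at and (fact.valid_to is None or at < fact.valid_to):
                return fact.obj
        return None


kg = TemporalKG()
kg.assert_fact("user", "lives_in", "Boston", at=1.0)
kg.assert_fact("user", "lives_in", "Denver", at=5.0)  # user moved; old fact is closed
```

With this layout, `kg.query("user", "lives_in", at=3.0)` still answers with the earlier city, which a decay-weighted summary bank cannot do. Multi-valued predicates and richer conflict resolution would need a different policy.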

By studying LD-Agent’s approach, you can gain insights into managing the flow of information into and out of a long-term memory system, handling event and persona data, and structuring the interaction between memory and generation modules. You can then adapt these concepts to fully exploit the structural and dynamic capabilities of your hierarchical KG.